Research Novelty Evaluator

Find out if your research idea is truly new — before you spend months on it.

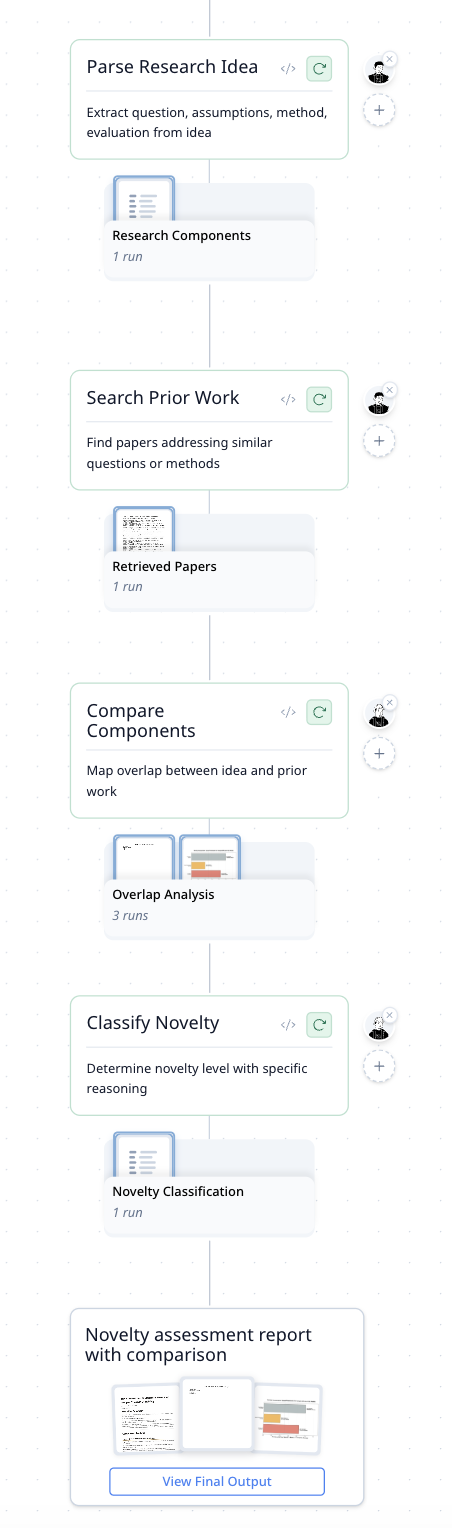

The Workflow

| Step | What It Does |
|---|---|
| Parse Research Idea | Extracts core components: research question, assumptions, method, evaluation criteria |
| Search Prior Work | Finds papers addressing similar questions or methods across academic databases and arXiv |
| Compare Components | Maps overlap between your idea and each paper: question vs. question, method vs. method |
| Classify Novelty | Rates your idea as largely redundant, incrementally new, or clearly distinct, with reasons |
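The Classify Novelty step can be pictured as a rule over per-component overlap scores. The sketch below is a hypothetical illustration, not MorphMind's implementation: the function name `classify_novelty`, the 0-to-1 score scale, and the `high`/`low` thresholds are assumptions chosen for readability. Under this rule an idea is largely redundant when a single paper overlaps strongly on every component, incrementally new when some components overlap, and clearly distinct otherwise, and each label comes with the reasons behind it.

```python
COMPONENTS = ("question", "assumptions", "method", "evaluation")


def classify_novelty(scores_per_paper, high=0.7, low=0.3):
    """Label an idea from its per-component overlap with each retrieved paper.

    scores_per_paper maps a paper identifier to {component: score in [0, 1]}.
    The rule and both thresholds are hypothetical, chosen for illustration.
    Returns (label, reasons).
    """
    reasons = []
    for paper, scores in scores_per_paper.items():
        # A single paper overlapping strongly on every component -> redundant.
        if all(scores.get(c, 0.0) >= high for c in COMPONENTS):
            return "largely redundant", [f"{paper} overlaps on every component"]
        # Otherwise record which components this paper partly covers.
        overlapping = [c for c in COMPONENTS if scores.get(c, 0.0) >= low]
        if overlapping:
            reasons.append(f"{paper} overlaps on: {', '.join(overlapping)}")
    if reasons:
        return "incrementally new", reasons
    return "clearly distinct", ["no paper overlaps on any component"]


# Made-up scores for two hypothetical papers.
scores = {
    "paper-A": {"question": 0.8, "assumptions": 0.4, "method": 0.1, "evaluation": 0.6},
    "paper-B": {"question": 0.2, "assumptions": 0.1, "method": 0.2, "evaluation": 0.1},
}
label, reasons = classify_novelty(scores)
print(label, reasons)
# incrementally new ['paper-A overlaps on: question, assumptions, evaluation']
```

Returning reasons alongside the label is what separates a verdict you can check from a single vague judgment.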

How It Was Built

"Build an agent that evaluates PhD research ideas by checking how new they actually are. Break the idea into components, compare each one against prior work, and classify the novelty."

The input was a clear problem statement, not code or technical specs. MorphMind turned it into a 4-step evaluation pipeline with literature search and visual overlap mapping.

Why This Works Better Than a Chatbot

Ask ChatGPT "Is my research idea novel?" and you usually get encouragement: "That's an interesting direction! It could be novel because..." That's not what you need. The problems:

- It doesn't actually check — the chatbot generates a plausible-sounding assessment without searching the literature. It doesn't know what was published last month. You feel validated, then get rejected at peer review for being "incremental."

- "Similar" is too vague — tools like Google Scholar find papers with similar keywords. But keyword overlap isn't the same as contribution overlap. Your method might be new even if the topic isn't. The chatbot can't make that distinction.

- No record of what was compared — you get a paragraph of opinion with no traceable sources. Was that comparison against 5 papers or 50? Which ones? What specifically overlapped? There's nothing to verify.
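The gap between keyword overlap and contribution overlap is easy to see on a toy example. This sketch uses made-up descriptions modeled on the optimal transport prompt further down (they are not real papers) and plain word-set Jaccard similarity as an assumed stand-in for whatever comparison the agent actually performs. Comparing whole descriptions makes the two look related because they share a topic; comparing only the methods shows no overlap at all.

```python
def word_set(text: str) -> set[str]:
    """Lowercased words with simple punctuation stripped; words of 3 letters or fewer are dropped."""
    words = (w.strip(".,;:()").lower() for w in text.split())
    return {w for w in words if len(w) > 3}


def jaccard(a: str, b: str) -> float:
    """Word-set Jaccard similarity: shared words divided by all distinct words."""
    sa, sb = word_set(a), word_set(b)
    union = sa | sb
    return len(sa & sb) / len(union) if union else 0.0


# Made-up descriptions: same topic, different method (not real papers).
idea = {"topic": "single-cell trajectory inference from RNA-seq data",
        "method": "optimal transport between time points"}
prior = {"topic": "single-cell trajectory inference from RNA-seq data",
         "method": "k-nearest-neighbor graph with diffusion pseudotime"}

# Keyword-style comparison: whole descriptions at once.
whole_text = jaccard(f"{idea['topic']} {idea['method']}", f"{prior['topic']} {prior['method']}")
# Component-level comparison: method vs. method only.
method_only = jaccard(idea["method"], prior["method"])

print(f"whole-text overlap: {whole_text:.2f}")
print(f"method overlap: {method_only:.2f}")
```

Shared words are a crude proxy (two paraphrased methods can share few words), but the structural point carries over: scoring each component separately keeps a shared topic from masking a different method.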

| The Problem | Workflow Approach |
|---|---|
| Chatbot gives encouragement, not evaluation | Component-level comparison with specific overlap mapping |
| Keyword similarity misses method-level novelty | Compares question, method, assumptions, and evaluation separately |
| No sources, no traceability | Every comparison links to specific papers with cited overlaps |
| One vague verdict | Clear classification (redundant / incremental / distinct) with reasons |
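Traceability mostly means keeping one record per paper and component instead of a single paragraph of opinion. A minimal sketch of such an audit trail follows; the `OverlapRecord` fields, the identifier `paper-A`, and the use of shared words as the "cited overlap" are illustrative assumptions, not the agent's actual output format. The texts are toy versions of the drug-drug interaction prompt below, not real papers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OverlapRecord:
    """One traceable comparison: which paper, which component, what overlapped."""
    paper_id: str                  # hypothetical identifier for a retrieved paper
    component: str                 # e.g. "question" or "method"
    score: float                   # word-set Jaccard overlap in [0, 1]
    shared_terms: tuple[str, ...]  # the overlapping words, kept as evidence (assumption)


def record_overlaps(paper_id: str, idea: dict[str, str], paper: dict[str, str]) -> list[OverlapRecord]:
    """Compare each component present in both the idea and the paper."""
    records = []
    for component in sorted(idea.keys() & paper.keys()):
        a = set(idea[component].lower().split())
        b = set(paper[component].lower().split())
        shared = a & b
        union = a | b
        score = len(shared) / len(union) if union else 0.0
        records.append(OverlapRecord(paper_id, component, score, tuple(sorted(shared))))
    return records


def audit_table(records: list[OverlapRecord]) -> str:
    """Render the records as Markdown table rows a reader can check."""
    rows = ["| Paper | Component | Overlap | Shared terms |", "|---|---|---|---|"]
    for r in records:
        rows.append(f"| {r.paper_id} | {r.component} | {r.score:.2f} | {', '.join(r.shared_terms)} |")
    return "\n".join(rows)


# Toy texts loosely based on the drug-drug interaction prompt; not real papers.
idea = {"question": "drug-drug interaction prediction",
        "method": "graph neural network on molecular fingerprints and patient EHR features"}
paper = {"question": "drug-drug interaction prediction",
         "method": "graph neural network on molecular graphs"}

records = record_overlaps("paper-A", idea, paper)
print(audit_table(records))
```

Each row answers the questions a bare verdict leaves open: which paper was compared, on which component, and what exactly overlapped.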

Example Prompts

Evaluate this: I want to use causal inference to improve sample efficiency in vision-language-action models for robotic manipulation.

My PhD proposal uses graph neural networks for drug-drug interaction prediction. The novelty is combining molecular fingerprints with patient EHR features. How new is this?

I'm proposing optimal transport for single-cell trajectory inference instead of graph-based approaches. Is this distinct enough?

Frequently Asked Questions

How can I check if my research idea is novel?

This agent breaks your idea into components (question, method, assumptions, evaluation), searches academic databases for similar work, and maps exactly where your idea overlaps or diverges. You get a clear verdict — redundant, incremental, or distinct — with cited evidence.

Can AI help with PhD literature review?

Yes. Beyond finding papers, this agent compares your specific contribution against prior work at the component level. It shows which aspects of your idea already exist and which are genuinely new, which makes it more targeted than a general literature search.

What is component-level novelty analysis?

Instead of matching keywords or topics, the agent decomposes both your idea and each retrieved paper into structured components (question, assumptions, method, evaluation). It then compares them component by component to identify where real overlap exists, avoiding false positives from surface-level similarity.